As part of Ontario's broader digital transformation of public services, this project explores how conversational UX can strengthen user confidence and accessibility within ServiceOntario. While essential services such as driver's licence renewal and vehicle registration are available online, many users still experience uncertainty, navigation friction, and low trust when completing complex government tasks digitally.



| Heuristics | How to improve | Rationale | Examine Current version |

|---|---|---|---|

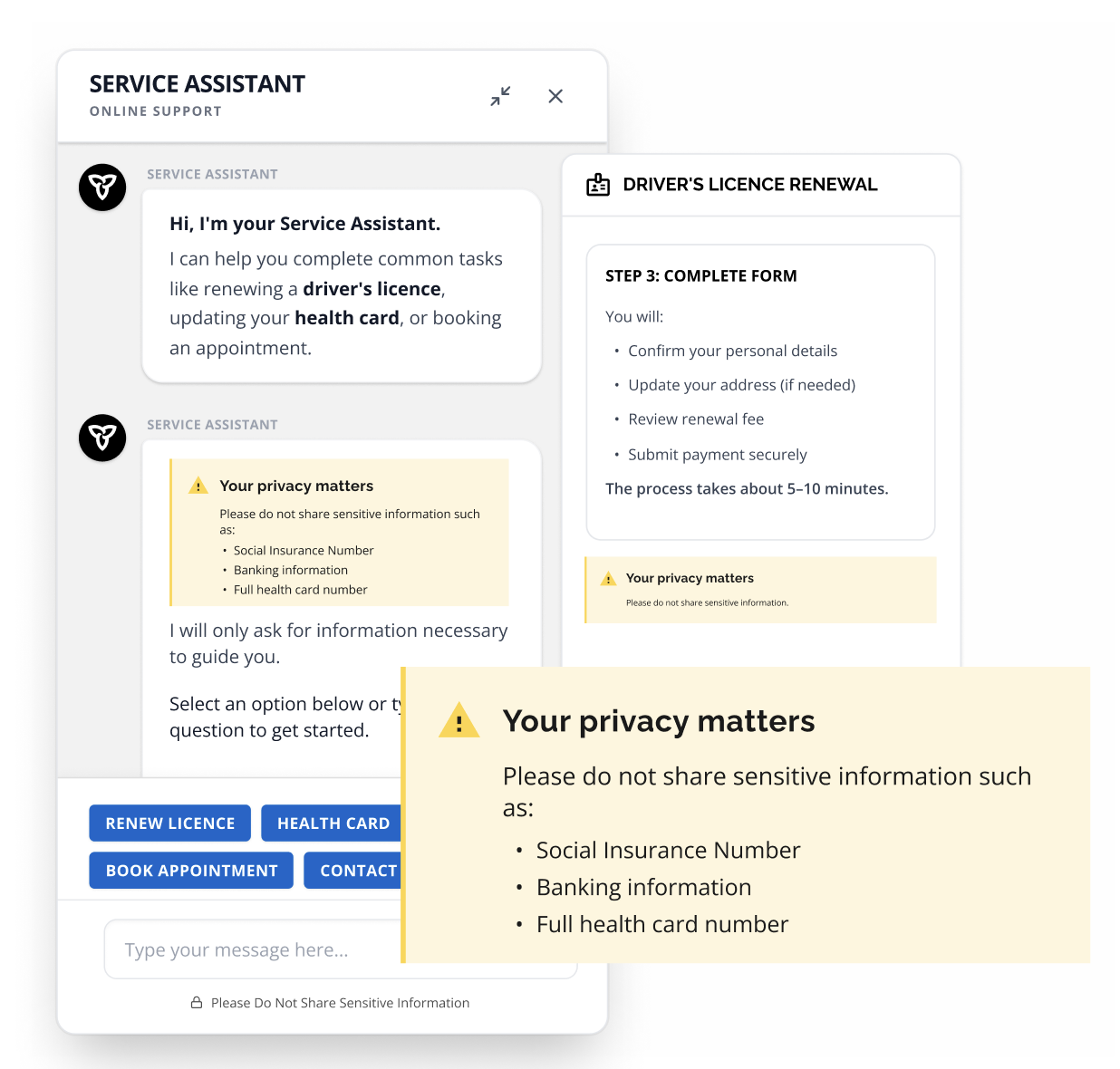

| Privacy | Ask for consent for privacy policy regulations inside the chatbot application before conversation begins. | ServiceOntario regularly handles confidential and personal information of Ontarians. It is important to ensure whether this data is safeguarded (Digitalization of ServiceOntario). | It does ask for consent, but it appears after the user starts chatting with the bot. |

| Accuracy |

Ability to access official government database and

provide authoritative answers and instructions. 1. Use step-by-step cards with one clear CTA (e.g., "Go to renewal page") instead of long paragraphs. 2. Fallback UI: if unsure, say it cannot confirm and offer human contact options (call-back/phone/email). |

"The information must align with what government provides and must lead users to the correct place." (Wang, Zhang & Zhao, 2022). | ✅ Language of use could be improved. Some responses are kind of paragraph-heavy. |

| Consistency (Visual Hierarchy) | Provide menu buttons at the bottom of the chat. Provide "Suggested next intents" chips after each answer. Maintain consistent tone and visual hierarchy. | Based on 12 heuristics for analysis of conversational interfaces (Höhn & Bongard-Blanchy, 2021). Chatbot should use domain model from user perspective and maintain personality and consistency in language and style. |

1. Current chat is

split and inconsistent. 2. Font does not stay the same (option menu vs instruction text). 3. Color scheme does not match website. |

| Effectiveness (Accessibility) |

1. Ability to answer questions 24 hours. 2. Provide detailed instructions after giving official answers. |

24-hour direct access to services through digital self-service is a ministry primary goal (2024–2025). | ✅ |

| Trustworthiness |

1. Implement simple language instead

of extended official language (including FAQs). 2. Do not only offer restricted answers; balance open and closed questions. 3. Improve conversational continuity. |

User acceptance of chatbots can be influenced by perceived humanness (Understanding Chatbot Adoption in Local Governments). | Does not use "I" pronoun. Offers menu buttons but conversational continuity could be improved. |

| Recovery |

1. Offer restart conversation option. 2. Fallback UI: if unsure, clearly state uncertainty and offer human contact options. |

Framework by Höhn & Bongard-Blanchy (2021) on conversational recovery and user control. | No clear restart mechanism. Limited recovery flow. |

| User Control & Flexibility | In-page, resizable chatbot panel. Minimize external page redirection. | For user retention, chatbot should minimize external links and maintain persistent context view. | Current version opens external pages and is not flexible. |

|

Minor Heuristics (VanHauer & Raimer, 2022) |

A: From simple FAQ answers to complex multi-turn

conversations. B: Support making appointments. C: Integrate into complete processes (e.g., filing applications, payment plans). D: Ask for feedback at the end of conversation. |

Based on Heuristic Evaluation of Public Service Chatbots (VanHauer & Raimer, 2022). | Limited integration into full processes. Feedback mechanism unclear. |

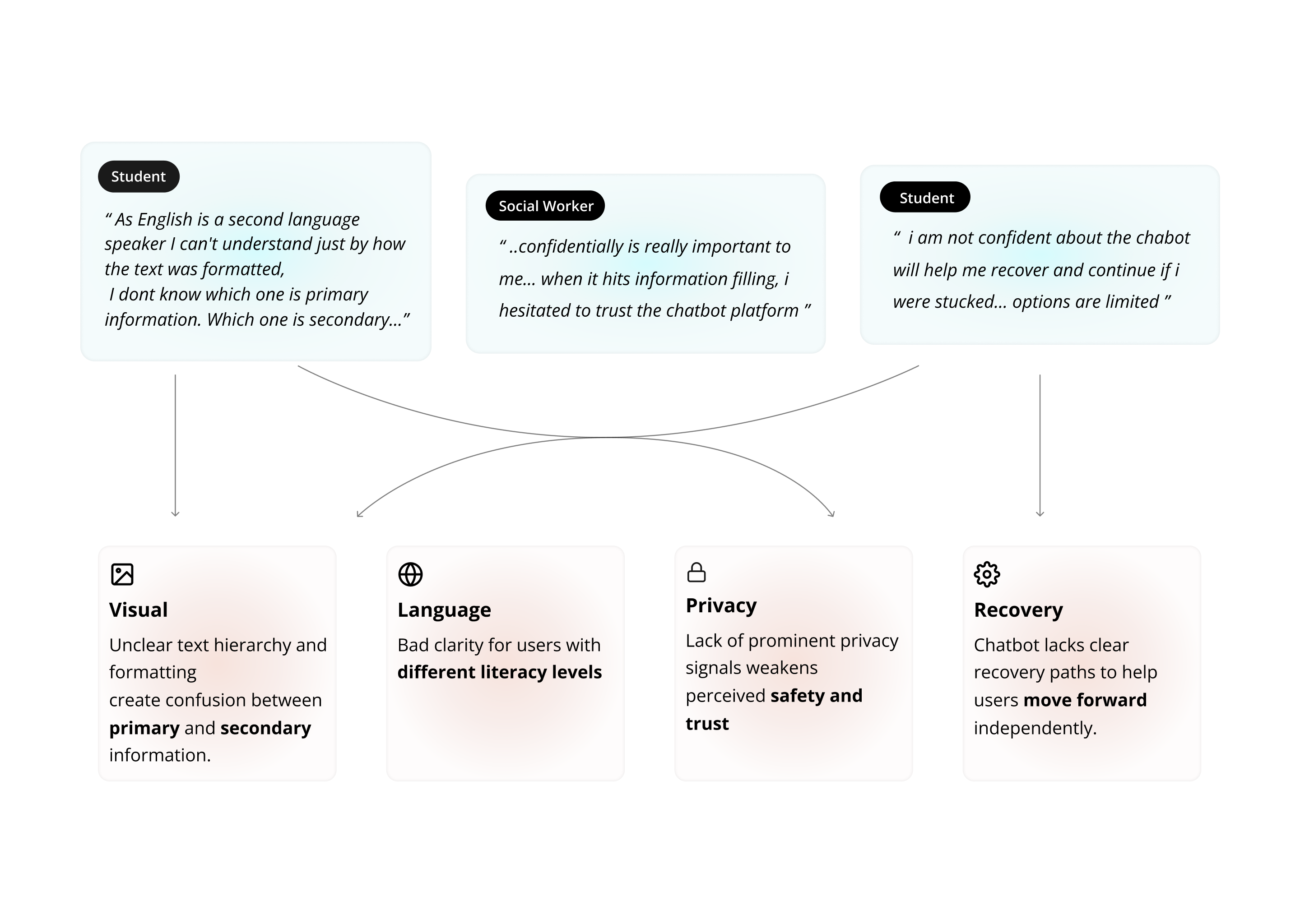

Context: Participants were asked to use the current version of the ServiceOntario chatbot to understand the process of renewing a driver's licence.

Purpose: Allow users to experience the existing chatbot firsthand and examine how it performs against the identified heuristics.

Test Group: Ontarians with varying levels of digital literacy. The group included both a native English speaker and a non-native English speaker.

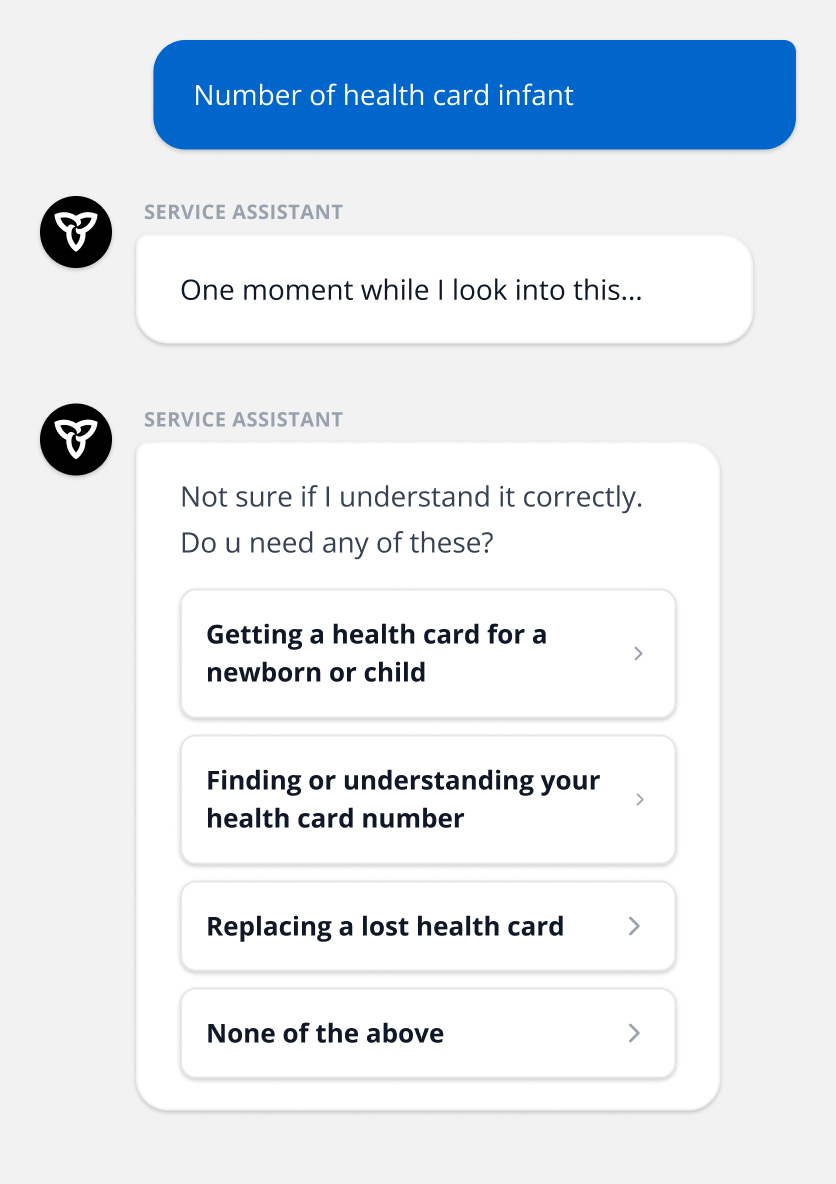

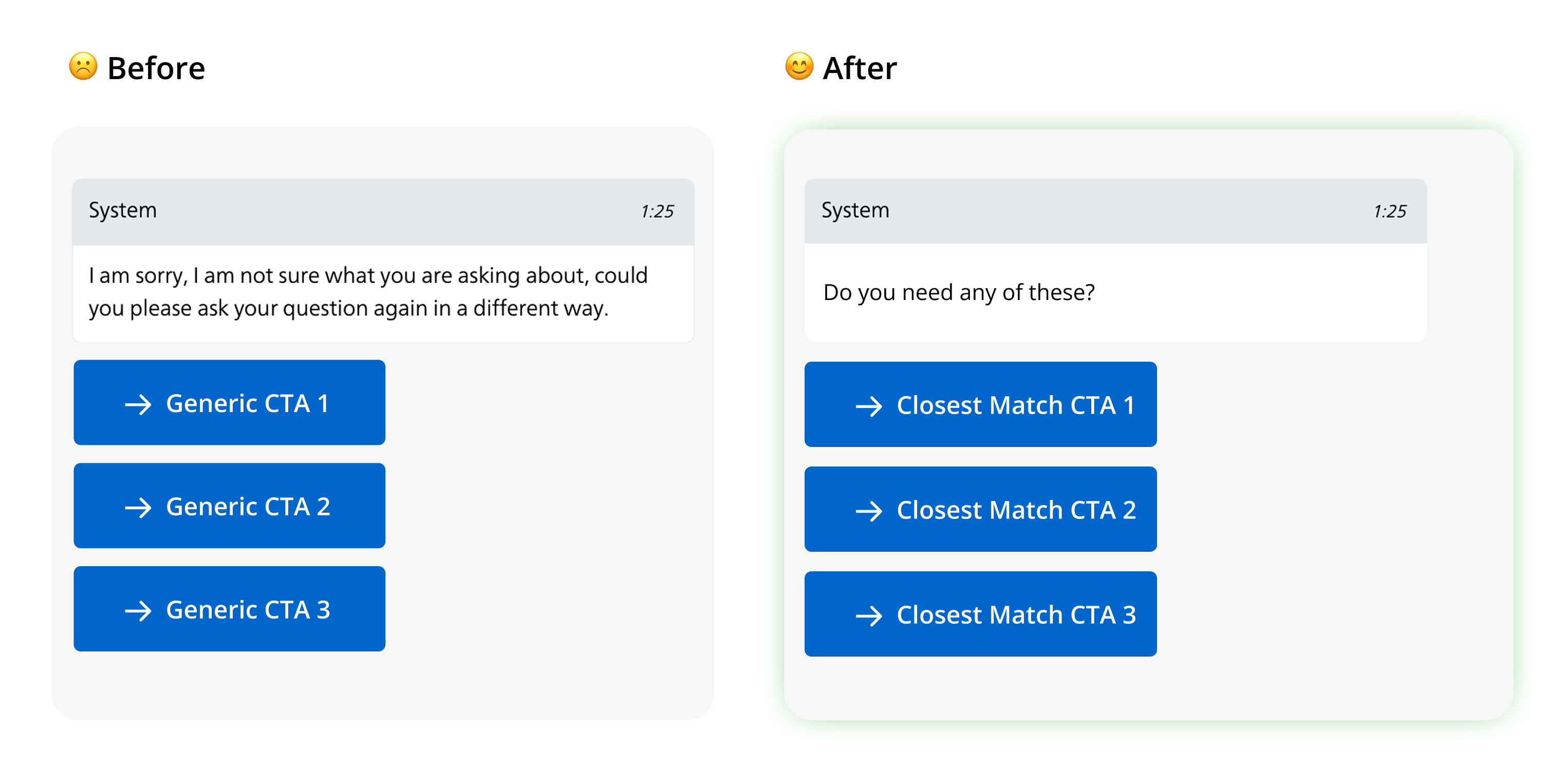

In a government service context, fallback moments are not just system errors — they are moments of user frustration and uncertainty. Instead of relying on vague “I didn’t understand” responses, I redesigned the chatbot recovery experience to guide users toward the closest relevant service pathways.
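To make the recovery logic concrete, here is a minimal sketch of how such a fallback could behave, assuming the bot's intent classifier returns a confidence score for each answer. The confidence threshold, the pathway list, the keyword matching, and the names `respond`, `closestPathways`, `Pathway`, and `BotReply` are all hypothetical illustrations, not ServiceOntario's actual implementation. Below the threshold, the bot admits it cannot confirm an answer, then offers the nearest service pathways, human contact options, and a restart chip.

```typescript
// Hypothetical pathway catalogue: ids, labels, and keywords are illustrative only.
interface Pathway {
  id: string;
  label: string;
  keywords: string[];
}

interface BotReply {
  kind: "answer" | "fallback";
  text: string;
  chips: string[]; // quick-reply buttons shown under the message
}

const PATHWAYS: Pathway[] = [
  { id: "licence-renewal", label: "Renew a driver's licence", keywords: ["renew", "licence", "license", "driver"] },
  { id: "vehicle-registration", label: "Renew vehicle registration", keywords: ["vehicle", "registration", "plate", "sticker"] },
  { id: "health-card", label: "Renew a health card", keywords: ["health", "card"] },
];

// Human contact options from the fallback heuristic (call-back/phone/email).
const HUMAN_CONTACT: string[] = ["Request a call-back", "Phone ServiceOntario", "Email ServiceOntario"];

// Assumed cut-off below which the bot should not present an answer as authoritative.
const CONFIDENCE_THRESHOLD = 0.6;

// Lower-case the message and split it into a set of word tokens.
function tokenize(text: string): Set<string> {
  return new Set(text.toLowerCase().split(/[^a-z0-9]+/).filter((t) => t.length > 0));
}

// Rank pathways by how many of their keywords appear in the user's message.
function closestPathways(message: string, limit = 2): Pathway[] {
  const tokens = tokenize(message);
  return PATHWAYS.map((p) => ({ p, score: p.keywords.filter((k) => tokens.has(k)).length }))
    .filter((x) => x.score > 0)
    .sort((a, b) => b.score - a.score)
    .slice(0, limit)
    .map((x) => x.p);
}

function respond(message: string, answer: string | null, confidence: number): BotReply {
  // Confident answer: show it, with a restart option always available.
  if (answer !== null && confidence >= CONFIDENCE_THRESHOLD) {
    return { kind: "answer", text: answer, chips: ["Start over"] };
  }
  // Not confident: state the uncertainty instead of guessing.
  const closest = closestPathways(message);
  const text =
    closest.length > 0
      ? "I can't confirm an answer to that, but these services look closest to your question:"
      : "I can't confirm an answer to that. You can reach a person through one of these options:";
  return {
    kind: "fallback",
    text,
    // Closest pathways first, then human contact, then a restart option.
    chips: [...closest.map((p) => p.label), ...HUMAN_CONTACT, "Start over"],
  };
}

const reply = respond("How do I renew my license?", null, 0.3);
console.log(reply.text);
console.log(reply.chips.join(" | "));
```

Keyword overlap stands in for whatever intent matching a production chatbot would use. The design point is the ordering of the fallback chips: the nearest service first, then a person, then a way to start over, so a low-confidence moment never ends in a dead end.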

Recovery design in live chat: